Approach:

This week marked the beginning of the next 4-5 weeks of further developing my visual-audioizer project. I also spent time this week working on my writing for a Graduate Fellowship (pg. 14-15, link: Global Arts & Humanities Brochure). Attached above are the paper I submitted and the current project proposal for the next few weeks. During this week I also tried to find more scholarly articles dealing with sound and animation; it's been somewhat difficult to find relevant information that could be used in my research. I keep returning to Paul Wells, Norman McLaren, and Peter Greenaway, and I recently found Rebecca Coyle. I also took this week as a chance to familiarize myself more with my topic. As stated in my previous journal, I decided that my sound and visuals should be reliant on each other, rather than equivocal/synonymous/related.

Choices Made:

This week I decided to further evaluate how to split up the amplitude of sounds within my patch. First, I had to determine the width/height of the composition that the patch was rendering and send that information to the synthesizer. To clarify: Find/set the dimensions of the video/webcam >> find/track the blobs on the screen >> count the total number of visible blobs >> send the data for individual blobs to a scalar value >> map this to a number and eventually an amplitude adjustment >> pass it through a division operator that equally distributes a total amplitude range across the total number of pixels noticed on screen >> output the data to the synthesizer and display the matching blob on another screen. Easy enough, right?

Inspirational Sources:

Unfortunately I haven't had the best of luck finding information about the subject. Most of what I've found deals with experiments that individuals have gone through, not with the synonymous/ambivalent relationship between visuals and audio.
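The blob-to-amplitude chain described under Choices Made can be sketched in plain Python. This is a hypothetical illustration, not the actual Max patch: `Blob` and `blobs_to_voices` are made-up names, and the sketch assumes the amplitude range is divided by the number of visible blobs and that blob positions are normalized by the frame's width and height.

```python
from dataclasses import dataclass


@dataclass
class Blob:
    """Hypothetical stand-in for one tracked blob's centroid, in pixels."""
    x: float
    y: float


def blobs_to_voices(blobs, width, height, total_amplitude=1.0):
    """Return one (amplitude, norm_x, norm_y) tuple per visible blob.

    The total amplitude range is divided equally by the blob count, so the
    summed output stays at total_amplitude however many blobs appear.
    """
    if not blobs:
        return []  # nothing visible, so nothing to send to the synth
    share = total_amplitude / len(blobs)
    # Normalizing by the frame size keeps positions in 0..1 whatever the
    # webcam resolution happens to be.
    return [(share, b.x / width, b.y / height) for b in blobs]
```

With two blobs at (160, 120) and (480, 360) in a 640x480 frame, this returns `[(0.5, 0.25, 0.25), (0.5, 0.75, 0.75)]`. The equal split keeps overall loudness constant as blobs enter or leave, at the cost of each blob getting quieter as more appear.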
I found one article by Rebecca Coyle that examines animation as an audio-visual film form, though she focuses more on the importance of sound within animated films (link: "Drawn to Sound"). A quote from her article (pg. 4): "Sound cannot be freeze-framed in the same way that images can be presented on the page, despite the best efforts of musicologists to capture dynamic elements by notating melodies and arrangements. Sound is constant movement." I love the closing sentence about sound being "constant movement", because it is true in both a literal and a figurative sense. Sound can only be created in an environment in which motion is possible and noticeable. If we could move a hand back and forth in front of our face quickly enough, we would eventually create a sound. In the same way, just clapping our hands or stomping a foot creates enough vibration for us to hear. All sound is created by motion, but not the other way around (I think). This article also has a slew of other references that I will eventually work through to find a stronger connection between audio and visuals in the animation realm.

Questions Raised & Needs:

Next Steps:

I need to do a little catch-up on creating the synthesizer (as mentioned in my previous post). I have started reworking what I already had, because my previous implementation ran all the values through the synth simultaneously before it would output a sound. I need some set values in place so that I can work with the synth; pre-determining these before switching them out for dynamically changing values will help me build a more coherent synthesizer. -Taylor Olsen
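The plan in Next Steps, starting the synthesizer from fixed values and only later swapping in dynamic ones, might look like this as a minimal Python sketch. The parameter names, the default values (220 Hz, 0.5 amplitude), and the sine-only voice are assumptions for illustration; the real synth lives in Max/MSP.

```python
import math

# Hypothetical fixed starting values for the synth; these stay in place
# until live tracking data is ready to replace them.
DEFAULTS = {"freq": 220.0, "amp": 0.5}


def synth_params(live=None):
    """Overlay any live (dynamic) values on top of the fixed defaults.

    Unknown keys are ignored, so a half-finished tracking patch cannot
    inject parameters the synth does not understand.
    """
    params = dict(DEFAULTS)  # copy, so the defaults are never mutated
    if live:
        params.update({k: v for k, v in live.items() if k in DEFAULTS})
    return params


def render_sine(params, duration=0.01, sample_rate=44100):
    """Render a plain sine tone as a list of float samples."""
    n = round(duration * sample_rate)
    return [
        params["amp"] * math.sin(2 * math.pi * params["freq"] * i / sample_rate)
        for i in range(n)
    ]
```

Calling `synth_params({"amp": 0.2})` keeps the default frequency and only swaps in the new amplitude, which is the "one value at a time" approach described above.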

*Prototyping example from a screen-capture using the blob-tracking linked to audio output.*

Visual Audioizer - Google Slides

Approach:

This week I decided to focus on interactivity with a user's body in some way, though it didn't come out the way I initially intended with the webcam. Through experimenting with which objects it noticed, I realized I could use either a black-on-white or a white-on-black array, which gives opposite results for the objects noticed (changing a "<" to a ">"). Knowing I could do this, I created a few different tests to see how the interactivity worked. Above you'll notice that the patch reacts only to the visible 'blobs' in the room; it can notice a change in position as well. I knew I wouldn't have time to incorporate the RGB data this time around, but I'm hoping to continue working on this project for the next couple of weeks. I also realized that changing the size of the rendered area yields different results: it produces deeper or higher notes in the sound output.

Choices Made:

Regarding the black-on-white versus white-on-black decision: when I tracked black objects, I drew a small dot on a piece of paper and placed it where the webcam would notice it as a single object. For the other test (the video above), I went into a dimly lit room and eventually shut off the lights. Afterwards, I placed a small paper cube over the flashlight on my phone and used it as the only 'noticed' object in the room. If my flash was fully lit, it would mess with what the webcam was seeing. I suppose a dimly lit object (a glowstick, an orb of light, a glow-in-the-dark bouncy ball?) would work better with the current settings. Eliminating any small objects within the camera's view (lights on the speakers in the background, reflections, etc.) would also aid in detecting the main light source.
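The "<" versus ">" switch described above amounts to flipping a threshold comparison. Below is a plain-Python sketch of that idea followed by a simple 4-connected blob search. The function names, the 0-255 greyscale frame format, and the threshold level are assumptions; in the actual patch this work is presumably handled by cv.jit objects rather than code like this.

```python
from collections import deque


def threshold(frame, level, bright_objects=True):
    """Binary mask from a greyscale frame (a list of rows of 0-255 values).

    bright_objects=True keeps pixels brighter than `level` (white-on-black,
    e.g. a covered phone flashlight in a dark room); False flips the
    comparison to keep dark pixels (a black dot on white paper).
    """
    if bright_objects:
        return [[px > level for px in row] for row in frame]
    return [[px < level for px in row] for row in frame]


def find_blobs(mask):
    """Return the centroid (x, y) of each 4-connected region of True pixels."""
    h = len(mask)
    w = len(mask[0]) if mask else 0
    seen = [[False] * w for _ in range(h)]
    centroids = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill collects every pixel of this blob.
            queue = deque([(x, y)])
            seen[y][x] = True
            xs, ys = [], []
            while queue:
                cx, cy = queue.popleft()
                xs.append(cx)
                ys.append(cy)
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    if 0 <= nx < w and 0 <= ny < h and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            centroids.append((sum(xs) / len(xs), sum(ys) / len(ys)))
    return centroids
```

Stray bright spots (speaker LEDs, reflections) each become their own blob under this scheme, which is why clearing them from the camera's view helps.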

Inspirational Sources:

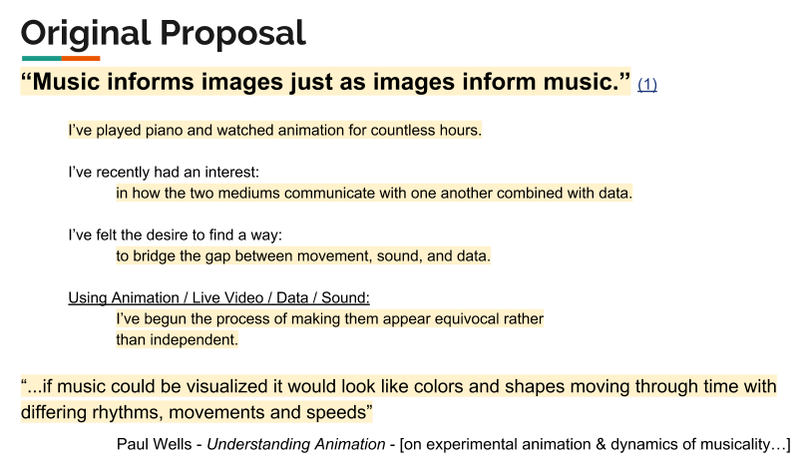

Above are a few quotes from animators I keep coming back to, along with the reasoning behind this 5-week project. As I've come to question, I'm not sure whether the imagery or the music side is more important in this relationship. My assumption that they're in an equivocal relationship is all wrong; I should instead assume their relationship is "reliant" upon one another. This creates a whole different feeling than assuming I have to make one better than the other. I want to design the patch using both as a means of testing each other's capabilities (and adjust afterwards) rather than letting one become much more interesting than the other. One way I've considered doing this is similar to drawing a face: one shouldn't draw high detail on one half, but rather work back and forth across both halves to create an equal relationship between the two.

Questions and Next Steps:

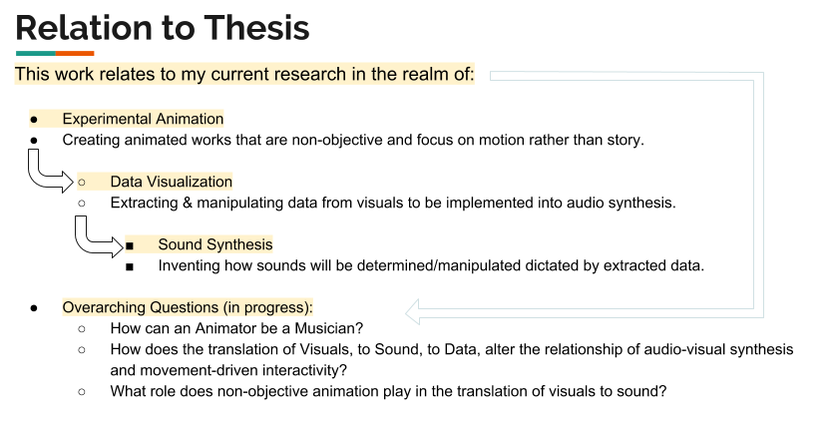

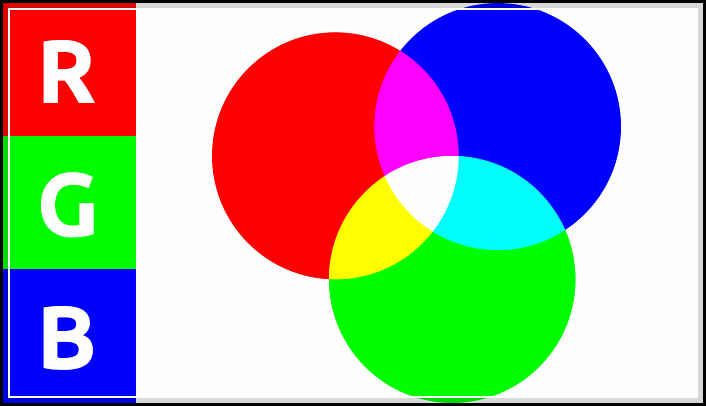

Above is an attempt to show how my current research topics relate to one another: Experimental Animation, Data Visualization, and Sound Synthesis. For my current questions above, I don't think I've come close to answering, or showing an example of, how an animator can be a musician, though I could create individual pieces of movement for each sound in a piece of music. I could also do the same and orchestrate something related to body movement, but that will be something to experiment with in the future.

Approach:

This week I concluded that I have more data available to collect using patches within Max/MSP. I wanted to approach this week understanding that I can pull RGB/greyscale values out of the video process, but it took a while of digging around the internet to find proper cv.jit (computer vision) videos on the process. I also wanted to reorganize the elements of the patch to make better use of the organizational tools in Max's visual layout. Finally, I wanted to create a more stable audio patch for the program and build it up piece by piece, rather than making it more complicated than it needs to be right off the bat.

Choices Made:

I chose to focus this week on finding reference information and video examples of extracting greyscale/RGB values. We also presented our in-progress work to the rest of our graduate class; I realized that I am a little behind schedule, but I'm staying on track to have more completed over the upcoming break. I want to get the synthesizer built up this week, as well as plug in and test different variations of values for changes in direction and movement speed.

Inspirational Sources:

Here are some of the video sources I found:

The videos above are great examples of using the cv.jit functionality coupled with an RGB screen reader, either of which could be used in my patches. Because I have more control over a single object, I'm strongly considering creating pre-rendered compositions with one solid object as the focus. This would allow me to layer tracks on top of one another and create a composition of my own.
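Pulling greyscale/RGB values out of the video and following one solid-colored object could look roughly like this in plain Python. It is a sketch under assumptions: the frame is a list of rows of (r, g, b) tuples, `luma` uses the common ITU-R BT.601 weights, and `track_color` with its tolerance value is a hypothetical helper, not a cv.jit object.

```python
def luma(r, g, b):
    """Greyscale value from 0-255 RGB using ITU-R BT.601 weights."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def track_color(frame, target, tolerance=40):
    """Centroid (x, y) of pixels within `tolerance` of a target RGB color.

    Returns None when no pixel matches, i.e. the object is not visible.
    """
    tr, tg, tb = target
    xs, ys = [], []
    for y, row in enumerate(frame):
        for x, (r, g, b) in enumerate(row):
            # Euclidean distance in RGB space: crude, but easy to reason about.
            if ((r - tr) ** 2 + (g - tg) ** 2 + (b - tb) ** 2) ** 0.5 <= tolerance:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    return (sum(xs) / len(xs), sum(ys) / len(ys))
```

A single strongly colored object gives one clean centroid per frame, which is what makes the "one solid object as the focus" idea easier to control than tracking many blobs.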

I have also considered using this patch as a means of creating unison between dancers. For example, if an overhead camera tracked their movements, and their hands, elbows, and shoulders were different colors, would there be a way for each dancer to hear their unison with the other dancers they happen to be working with? Comparing our senses, specifically hearing versus seeing, we're much better at detecting differences in pressure (sound) than differences in light (visual). There could be different color patches on their shoulders, elbows, knees, etc., to indicate to any computer vision software the extremities of "one" individual.

Questions Raised & Needs:

Next steps:

My next steps are to keep working within the bounds of the data I can currently collect and to assign it to what I think (and will test) are proper values for the synth. I'll begin by building up the frequency first, then move on to amplitude and the amplitude envelope.

Approach:

SELF RANT: I let myself down this week, meaning I am behind; ironically, this seems to mirror last year's attitude towards my own track of research. This topic of sound and motion is worth my time, and I should put in the effort for my research and for those who would benefit from it. I consider it something that will push the boundaries of what I thought I could create, and these insistent distractions from completing my work will eventually go away so that I can find time to appreciate what others would gain from it. I need to do it for myself, and that's that. Now that I'm done ranting, back to business. I feel compelled to make things right this coming week. Last week I explored using MIRA in my Max patch, but ran into a compatibility issue: the jit.window doesn't load with the patch on the iPad screen. This was discouraging, as I couldn't find a solution online, but there might be a way to stream the video it found to another window type and have that window (rather than the jit.window) be the main output of the visuals being observed.

Choices Made:

This week I chose to focus more on my mental health, dealing with the overwhelming amount of work on my plate (my own fault for signing up for studio courses), and on regaining the drive I needed to accomplish and catch up on the work I didn't complete the week prior. I went ahead (as mentioned) and began using MIRA as a means of making the interface of Max/MSP/Jitter friendlier to users who aren't familiar with the software.
While creating and interacting with the app, I realized that interface design also has a heavy influence here. I made many of the objects within the patch larger so there wouldn't be issues with accidentally pressing buttons that didn't need to be touched. I also fiddled with colors and layout, putting the whole patch on a black background and highlighting only the things I thought were important.

Inspirational Sources:

Visual Music - Animation Studies

"Music informs images just as images inform music. This sounds simple enough, it is a manifestation of an essential (yet often overlooked) aspect of perception, that of “sense-giving” and of “sense-receiving”... One could paraphrase Norman McLaren and posit that “Visual Music is what happens between the music and the images”... Yet, if we agree with Maurice Merleau-Ponty (“Perception is constitutive”), we must acknowledge the/our all-important subjectivity as being that which provides (or not) the sense we are talking about, be it given, and/or received. We are therefore confronted by (the experience of) elements that are always in flux: if one is foreground, the other is background, and if our gaze changes, intentionally or not, the priorities change accordingly."

This fascinates me, as I had thought hard about which was more important, the visuals or the sound, as they played off each other. I hadn't considered that the two should be in a constant state of flux, and keeping this in mind while experimenting relieves some of my stress about making the audio the main facet of interesting content. The post also mentions that the "space" between images and music is "subject to various tensions", including the "literal" and the "equivocal". The 'literal' sense rests more on figure/ground differentiation, treating images and music as two different 'things' to be considered.
In the 'equivocal' sense, music and image are equals: they can no longer be identified as separate elements but coexist within one realm of content. I would also like to thank my professor as a source of inspiration this week. Maria is a considerate and passionately driven individual in the realm of animation and research; she is a constant force for good and makes sound, reasonable decisions for herself, the department, and others. I feel lucky to have her around and even to be in the same room as her. She knows how to look at and address specific issues in design thinking, as well as personal woes. I had been treating most of my work as a way of pleasing others, but I need to STOP doing that. This is for me; this is about what I want to make and how I want to use it. Whether or not others find it interesting is solely up to them, but there won't BE ANYTHING if I don't get my ass in gear and work towards my goal.

Questions Raised & Needs:

Next steps:

Keep my chin up and work. Take advice from others and relax when necessary. Don't worry about what "could" be, but about what I can work with for the time being. Take everything one step at a time, don't overload myself with useless probabilities, and focus on what's important: health, wife/friends/family, curiosity to learn and explore, giving myself some slack (because what I am doing isn't easy), finding balance, following a schedule, feeding the cat-brats. -Taylor Olsen


May 2020
