
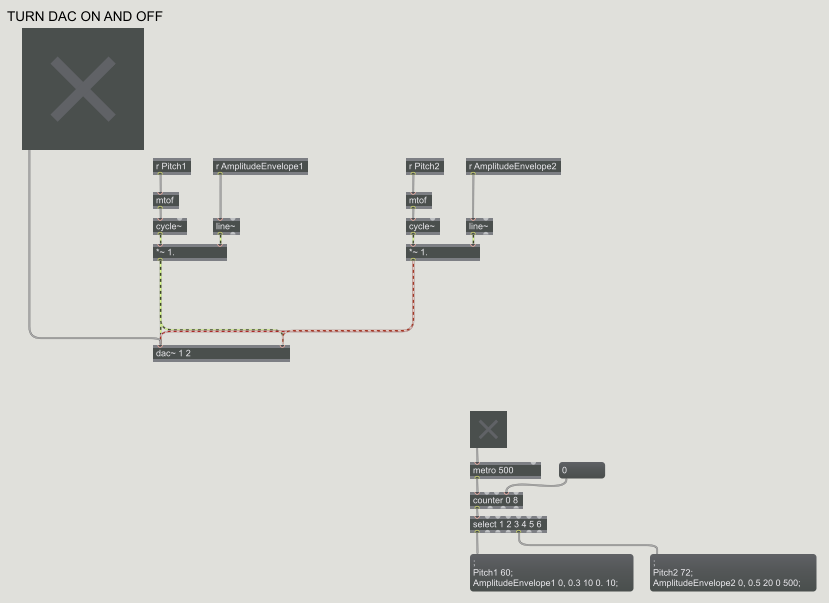

*A Max/MSP patch with the inputs of Frequency and Amplitude.*

LINK TO PREVIOUS WORK

Approach:

For the beginning of this week, I decided to continue with my previous exploration path: the visual "Audio-izer" (link above). I want this to lead into my next phase of projects, which include understanding the fundamentals of sound and visual synthesis, as well as finding new ways to explore animated loops. In my music synthesis class, we've been studying the basic physics of sound: its properties and how it is made. This interests me greatly because it relates closely to my own field of study, where I go through a similar process, and there are many connections to be made between sound and visuals. Below you can find the PDF of my current proposal.

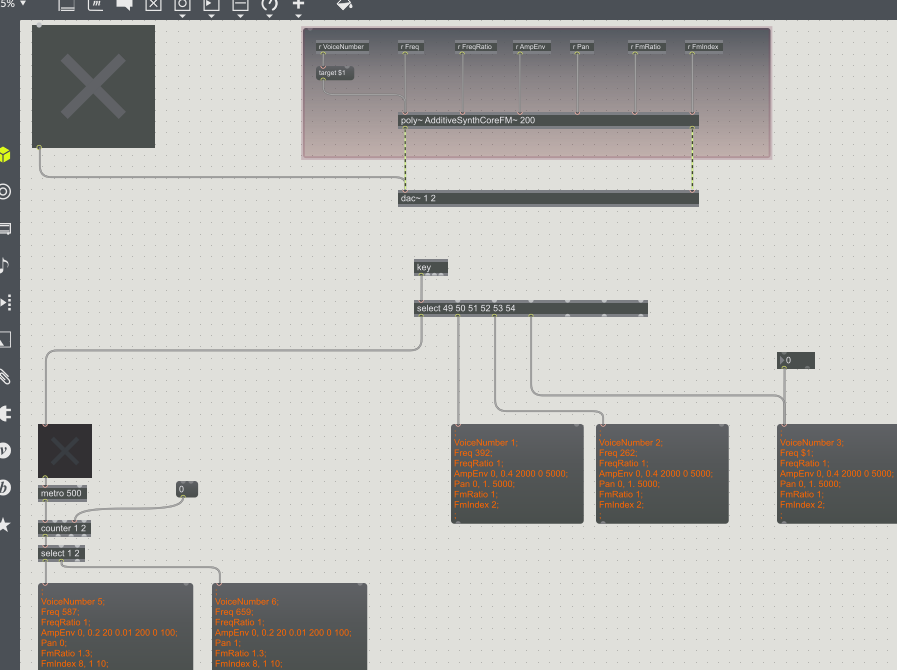

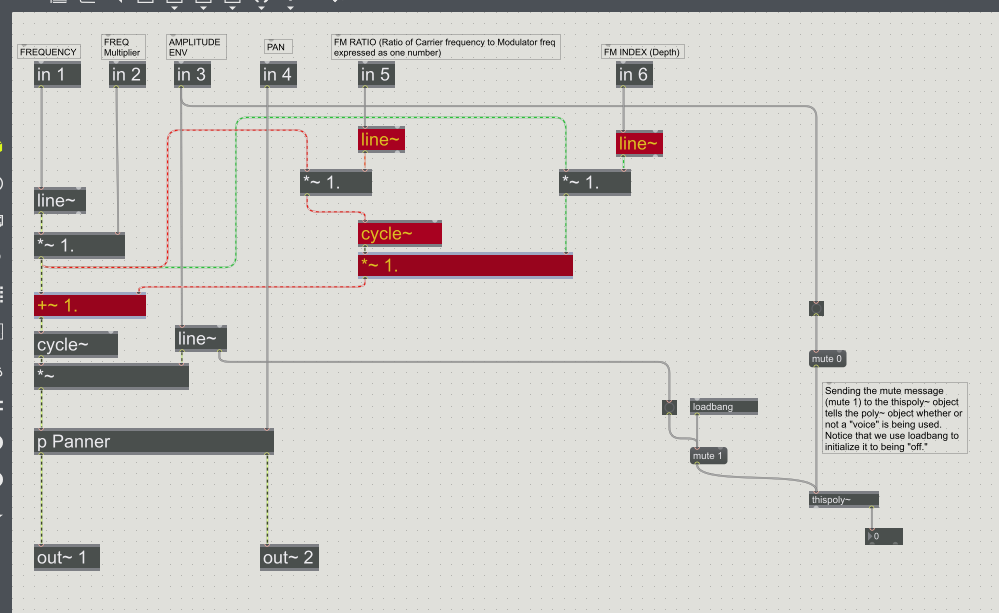

*A slightly more complex Max/MSP patch with the inputs of Amplitude Envelope, Frequency, Modulation, Frequency Ratios, and Frequency Modulation Indexes.*

Choices Made:

This week I decided to return to working with Max. I didn't get as far as I'd hoped until I realized that understanding the basics of sound and how to produce it is exactly what my research needed. I've gained a better understanding of how sound waves are produced, and I found a basic, easy-to-understand reference online: What is Sound?

*An internal patch (in this case, one associated with a "poly" patch) used to create the sounds for the previous image. It is basically the inner workings of a patch, and it almost looks like a circuit board to me.*

Inspirational Sources:

This week I found an online post by a group who experimented with the synthesis of visuals and sound. I found one very interesting point about how they were using sound to drive the visuals: Audio Visual Synthesis

"After playing with AV in many ways for some years we have concluded that the relationships between Audio and Visual streams are plastic, ie. not fixed. So in AVS there is no absolute correct mapping for e.g. colour frequency to sound frequency. The mappings can be set as required, creating relationships. Some relationships work better than others. As in real life relationships, using intuition and trial and error we (sometimes) home in on the ones that work best… "

I think this quote is very important, as I was never sure how I would decide which color is created by which sound. There's also a consideration I've begun to elaborate on: a relationship between sound and visuals is inevitable because, in the simplest terms, both are made of waveforms. In this sense, color-to-sound values could be derived as multiples of the frequencies involved (I would have to work out the math, but it is something to consider).

Questions Raised & Needs:
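As a starting point for the math mentioned above, here is a rough Python sketch of one possible mapping: keep doubling a sound frequency (raising it one octave at a time) until it reaches the range of visible light. This is only one of many possible mappings, not the method used in the Max patch. The function name `sound_to_light` and the rounded 400 THz lower bound are assumptions made for illustration; treating visible light as a single octave from about 400 to 800 THz is an approximation.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second

# Assumption: treat visible light as one octave starting near 400 THz (deep red).
# The real visible band is slightly narrower than a full octave.
BAND_LOW_HZ = 400e12


def sound_to_light(freq_hz):
    """Double a sound frequency until it reaches the assumed light band.

    Returns (octaves_raised, light_frequency_hz, wavelength_nm).
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    octaves = 0
    light_hz = float(freq_hz)
    while light_hz < BAND_LOW_HZ:
        light_hz *= 2.0  # one octave up is a multiple of 2
        octaves += 1
    wavelength_nm = SPEED_OF_LIGHT / light_hz * 1e9
    return octaves, light_hz, wavelength_nm


if __name__ == "__main__":
    for note_hz in (261.63, 440.0, 880.0):
        octaves, light_hz, nm = sound_to_light(note_hz)
        print(f"{note_hz} Hz -> {octaves} octaves up, "
              f"{light_hz / 1e12:.1f} THz, {nm:.1f} nm")
```

With this mapping, a 440 Hz tone ends up 40 octaves higher at a wavelength of roughly 620 nm, which sits in the orange part of the spectrum. Every octave of the same note maps to the same color, which may or may not be the relationship that works best.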

This next week I'll be testing the ideas described above for determining sounds. I also want to explore different ways of making the patch more CPU-efficient. I should also play around more with MIRA on an iPad to see how I can use it as an interactive device.

-Taylor Olsen


May 2020
