
*Prototyping example from a screen-capture using the blob-tracking linked to audio output.*

Visual Audioizer - Google Slides

Approach:

This week I decided to focus on interactivity with the user's body in some way, though using the webcam did not turn out quite the way I initially intended. While experimenting with which objects the patch noticed, I realized I could use either a black-on-white or a white-on-black array, which gives opposite results for the objects noticed (changing a "<" to a ">"). Knowing I could do this, I created a few different tests to see how the interactivity worked. In the capture above, the patch reacts only to the visible 'blobs' in the room, and it can also notice a change in their position. I knew I wouldn't have time to incorporate the RGB data this time around, but I'm hoping to continue working on this project for the next couple of weeks. I also realized that changing the size of the rendered area yields different results: it makes the notes in the sound output deeper or higher.

Choices Made:

Regarding the black-on-white versus white-on-black decision: when tracking black objects, I drew a small dot on a piece of paper as a test and placed it where the webcam would notice it as a single object. For the other case (and the video above), I went into a dimly lit room and eventually shut off the lights. Afterward, I placed a small paper cube over the flashlight on my phone and used it as the only 'noticed' object in the room. If I had the flash fully lit in the room, it would interfere with what the webcam was seeing. I suppose a dimly lit object (a glowstick, an orb of light, a glow-in-the-dark bouncy ball?) would work better with the current settings. Eliminating any small objects within the camera's view (lights on the speakers in the background, reflections, etc.) would also aid in detecting the main light source.
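To make the "<" versus ">" switch a little more concrete, here is a minimal Python sketch of the idea. It is not the patch itself. It assumes a grayscale frame stored as rows of 0-255 values, treats every pixel past the threshold as part of one blob, and maps the blob's horizontal position onto a MIDI note range. The names `blob_centroid` and `position_to_midi`, the threshold of 128, and the note range are all hypothetical choices made for illustration.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # grayscale rows, 0 (black) to 255 (white)


def blob_centroid(frame: Frame, threshold: int = 128,
                  track_bright: bool = True) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of all pixels past the threshold, or None."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            # Flipping this comparison is the "<" versus ">" switch:
            # bright-on-dark tracking uses ">", dark-on-bright uses "<".
            hit = value > threshold if track_bright else value < threshold
            if hit:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # nothing in view crossed the threshold
    return sum_x / count, sum_y / count


def position_to_midi(x: float, width: int, low: int = 48, high: int = 84) -> int:
    """Map a horizontal position linearly onto a MIDI note range (assumed mapping)."""
    span = max(width - 1, 1)
    return low + round((x / span) * (high - low))


# A dark room with one lit pixel, like the covered phone flashlight.
dark_room = [[0] * 5 for _ in range(5)]
dark_room[1][2] = 255
centroid = blob_centroid(dark_room, track_bright=True)
if centroid is not None:
    note = position_to_midi(centroid[0], width=5)
```

Because every matching pixel counts toward the same centroid, a stray reflection or speaker light pulls the tracked position away from the main light source, which is why clearing small bright objects out of view helps. In this sketch, widening or narrowing the `low` to `high` range is one way the output could end up deeper or higher.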

Inspirational Sources:

Above are a few quotes, and the reasoning behind this 5-week project, from a few animators that I seem to keep coming back to. Just as they do, I've come to question whether the imagery or the music side is more important in this relationship. The idea I've been holding, that they're in an equivocal relationship, is all wrong; I should actually be assuming that they are "reliant" upon one another. This creates a whole different feeling from assuming that I have to make one better than the other. I want to design the patch using both as a means of testing each other's capabilities (and adjust after) rather than letting one become much more interesting than the other. A way I've considered doing this is somewhat similar to drawing a face: one shouldn't draw high detail in one half, but rather take steps on both halves of the face, back and forth, to create an equal relationship between the two.

Questions and Next Steps:

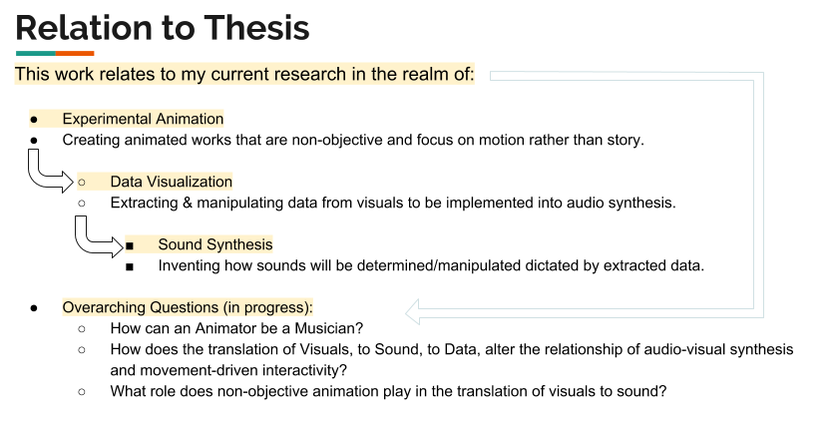

Above is an attempt to show the relationships among the research topics I'm currently exploring: Experimental Animation, Data Visualization, and Sound Synthesis. As for my current questions above, I think I've yet to come close to answering, or showing an example of, how an animator can be a musician, except in the sense that I could create individual pieces of movement for each sound in a piece of music. I could also do the same and orchestrate something related to body movement, but that will be something to experiment with in the future.
