
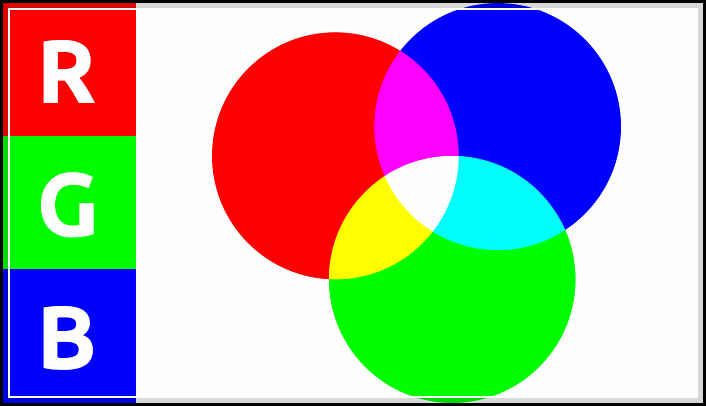

Approach: This week I concluded that I have more options for data collection using patches within Max/MSP. I went in knowing that I can pull RGB/greyscale values out of the video process, but it took a while of digging around the internet to find proper cv.jit (computer vision) videos on the process. I also wanted to organize the elements of the patch itself and make better use of Max's visual layout. Finally, I wanted to create a more stable audio patch for the program and build it up piece by piece, rather than make it more complicated than it needs to be right off the bat.

Choices Made: I chose to focus this week on finding reference information and video examples for extracting greyscale/RGB values. We also presented our in-progress work to the rest of our graduate class; I realized that I am a little behind schedule, but I'm on track to get more completed over the upcoming break. I want to get the synthesizer built up this week, as well as plug in and test different variations of values for change in direction and movement speed.

Inspirational Sources: Here are some of the video sources I found:

The videos above are great examples of using the cv.jit functionality coupled with an RGB screen reader, each of which could be utilized for my patches. Because I have more control over a single object, I am seriously considering creating pre-rendered compositions with one solid object as the focus. This would allow me to layer tracks on top of one another and create a composition of my own.
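To make the idea of reading RGB/greyscale values out of a frame concrete, here is a minimal sketch in plain Python rather than Max. It is not cv.jit or Jitter code; the frame layout (rows of `(r, g, b)` tuples) and the function names `mean_rgb` and `to_grey` are my own assumptions for illustration. The greyscale conversion uses the common Rec. 601 luma weights.

```python
def mean_rgb(frame):
    """Average the R, G, B channels over every pixel of a frame.

    `frame` is assumed to be a list of rows, each row a list of
    (r, g, b) tuples with values in 0-255 (a hypothetical layout).
    """
    totals = [0, 0, 0]
    count = 0
    for row in frame:
        for r, g, b in row:
            totals[0] += r
            totals[1] += g
            totals[2] += b
            count += 1
    if count == 0:
        raise ValueError("frame has no pixels")
    return tuple(t / count for t in totals)


def to_grey(r, g, b):
    """Convert one RGB value to greyscale using Rec. 601 luma weights."""
    return 0.299 * r + 0.587 * g + 0.114 * b


# Usage: a tiny 1x2 frame with one red and one blue pixel.
frame = [[(255, 0, 0), (0, 0, 255)]]
avg_r, avg_g, avg_b = mean_rgb(frame)
grey = to_grey(avg_r, avg_g, avg_b)
```

A single averaged value per frame is the simplest thing to feed a synth; tracking one solid object would mean averaging only over that object's region instead of the whole frame.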

I have also considered the implications of using this patch as a means of creating unison between dancers. For example, if an overhead camera tracked their movements, and their hands, elbows, and shoulders were marked in different colors, would there be a way for each dancer to hear the unison with the other dancers they happen to be working with? In the specific case of hearing versus seeing, we are much more able to hear differences in pressure (sound) than we are to see differences in light (visual). There could be different color patches on their shoulders, elbows, knees, etc., to indicate to any computer vision software the extremities of "one" individual.

Questions Raised & Needs:

Next steps: My next steps are to keep working within the bounds of the data I can currently collect and to assign it to the synth, testing to find what the proper values are. I'll begin by building up the frequency first, then move on to amplitude and the amplitude envelope.
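As a rough sketch of that build order (frequency, then amplitude, then envelope), here is one way the mapping could look in plain Python. This is not the Max patch itself; the 110-880 Hz range, the linear attack/release shape, and the names `grey_to_frequency`, `envelope`, and `render_tone` are all assumptions I picked for illustration.

```python
import math

SAMPLE_RATE = 44100  # samples per second (assumed)


def grey_to_frequency(grey, low_hz=110.0, high_hz=880.0):
    """Map a greyscale value (0-255) exponentially onto a pitch range."""
    t = min(max(grey, 0), 255) / 255
    return low_hz * (high_hz / low_hz) ** t


def envelope(i, n, attack=0.1, release=0.3):
    """Linear attack/release envelope for sample i of n (gain 0.0-1.0)."""
    pos = i / n
    if pos < attack:
        return pos / attack
    if pos > 1 - release:
        return (1 - pos) / release
    return 1.0


def render_tone(grey, amplitude=0.5, seconds=0.5):
    """Render a sine tone whose pitch follows the greyscale value."""
    freq = grey_to_frequency(grey)
    n = int(SAMPLE_RATE * seconds)
    return [
        amplitude * envelope(i, n) * math.sin(2 * math.pi * freq * i / SAMPLE_RATE)
        for i in range(n)
    ]


# Usage: a mid-grey frame produces a half-second tone.
samples = render_tone(128)
```

The exponential mapping is a design choice: equal steps in brightness then sound like equal steps in pitch, which a linear mapping in Hz would not give.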
